Abstract

AI has moved quickly from boardroom curiosity to operational pressure. GRC teams are being asked to reduce manual work, strengthen assurance, and do more with the same headcount. The problem is that generic AI can sound right while producing outputs that are hard to evidence, hard to explain, and impossible to defend in front of auditors, regulators, or the board.

This guide aims to give GRC professionals clarity and assurance on how to use AI in work that remains defensible to regulators and the board. That means AI that works from real evidence, sits inside governed workflows, keeps humans in control, and produces traceable outputs.

Effective AI-enabled GRC starts with trust, not speed

AI is now firmly in focus for risk, compliance, audit, and governance teams. The pressure is coming from every direction: boards want greater productivity, teams need to reduce manual work, and the volume of regulatory change, evidence requests, third-party data, control documentation, and reporting is only increasing.

But in GRC, speed is not enough.

A compliance output still needs to be owned. Controls have to be mapped correctly. A board report still needs to stand up to challenge. A regulator will not accept “the AI said so” as a defense.

That is why effective AI-enabled GRC has to start with trust, reliability, and evidence. The question is not simply whether AI can generate an answer. It is whether your team can understand it, verify it, and stand behind it.

This is already becoming a practical priority. Boston Consulting Group has found that risk and compliance is the top support function for AI and GenAI adoption, ahead of functions such as IT/Tech. BCG also argues that risk and compliance functions need to manage AI-generated risks while applying AI to improve risk processes and decision-making.

“In GRC, the question is not just whether AI can produce an answer. It is whether your team can stand behind that answer.”

Rich Eddolls, Chief Product Officer, CoreStream GRC AI strategy paper

The answer is not to slow AI down or pretend teams can opt out forever. In many organizations, that may now be the bigger operational risk. If GRC leaders do not create safe routes for AI adoption, teams will find their own workarounds.

That tension is already visible. Risky Women’s AI recap found that 89% of risk professionals see AI as a positive force, while 57% are worried about ethical and reputational risks, and 80% say organizations lack the skills and processes needed for AI threats.

So, the real challenge is not whether GRC teams should use AI. It is how they use it in a way that is trusted, governed, and defensible, so that AI makes sense and truly works for your business.

Why is generic AI risky in GRC?

Generic AI tools can be useful. They can summarize, draft, and brainstorm. That can be effective, especially for generic work.

But GRC is not just a writing exercise.

GRC depends on precision, context, evidence, and accountability. A confident answer is not the same as a reliable one. Relying on off-the-shelf LLMs in risk and compliance can create business risk, with typical output accuracy often sitting between 50% and 60%.

For risk, compliance, audit, and controls teams, the danger is not only that AI gets something wrong or hallucinates. It is that the wrong output enters a workflow, shapes a control decision, appears in a report, or becomes part of the evidence trail.

That is where generic AI can create real exposure.

The common failure points are clear:

- Incorrect control mappings

- Misaligned evidence validation

- Hallucinated justifications

- Inconsistent framework interpretation

- Overconfident summaries with no source links

- Data leakage through unapproved tools

- Shadow AI workarounds when internal policies are too rigid

- Weak accountability when nobody owns the AI-assisted decision

For business leaders, the issue is not that AI is “bad.” The issue is that generic AI is not automatically fit for governed GRC work.

A restrictive AI policy may feel safer, but it can create a different problem: Shadow AI. If teams are under pressure to meet deadlines and the approved process is too slow, people may turn to unapproved tools anyway. That means sensitive control data, vendor information, policies, incidents, or audit material could end up in tools that were never reviewed for privacy, security, or governance.

IBM’s 2025 Cost of a Data Breach research makes this risk hard to ignore. IBM found that AI adoption is outpacing security and governance, with 63% of breached organizations studied lacking AI governance policies and only 37% having approval processes or oversight mechanisms in place. IBM also reported that 1 in 5 organizations experienced a breach due to shadow AI.

That matters because GRC teams are supposed to reduce uncertainty, not add another unmanaged layer of it.

So, the question is not “can this AI help?” It can.

The better question is: can this AI output be traced, reviewed, challenged, and defended?

If the answer is no, it is not ready for serious audit-proof GRC.

What does “trusted and verified” AI actually mean in GRC?

Trusted AI in GRC is not defined by whether it uses the most famous model, the most advanced interface, or the most impressive demo. It is defined by whether your organization can prove how the output was created, what evidence it used, who reviewed it, and what decision followed.

That distinction matters. Even a highly accurate model is not automatically trustworthy in a GRC context if it cannot show its working. If an AI output is 99.9% accurate, the remaining risk still matters when that output supports a control assessment, third-party review, audit finding, or board report. The issue is not just whether the answer is likely to be right. It is whether the answer can be traced, tested, challenged, and corrected when it is wrong.
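
To make the residual-risk point concrete, here is a rough sketch of the arithmetic. It assumes every output is wrong independently with the same probability, which is a simplification, and the volume of 1,000 outputs is a hypothetical figure rather than a number from any study. The function names are illustrative only.

```python
def expected_errors(accuracy: float, n_outputs: int) -> float:
    """Expected number of wrong outputs, assuming independent errors."""
    return (1.0 - accuracy) * n_outputs


def prob_at_least_one_error(accuracy: float, n_outputs: int) -> float:
    """Chance that at least one of n outputs is wrong, assuming independence."""
    return 1.0 - accuracy ** n_outputs


# Hypothetical volume: 1,000 AI-assisted outputs at 99.9% accuracy.
print(round(expected_errors(0.999, 1000), 2))          # 1.0
print(round(prob_at_least_one_error(0.999, 1000), 2))  # 0.63
```

Under these assumptions, a 99.9% accurate model still has roughly a 63% chance of producing at least one wrong output across 1,000 uses, which is why the ability to trace and correct an answer matters as much as the headline accuracy.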

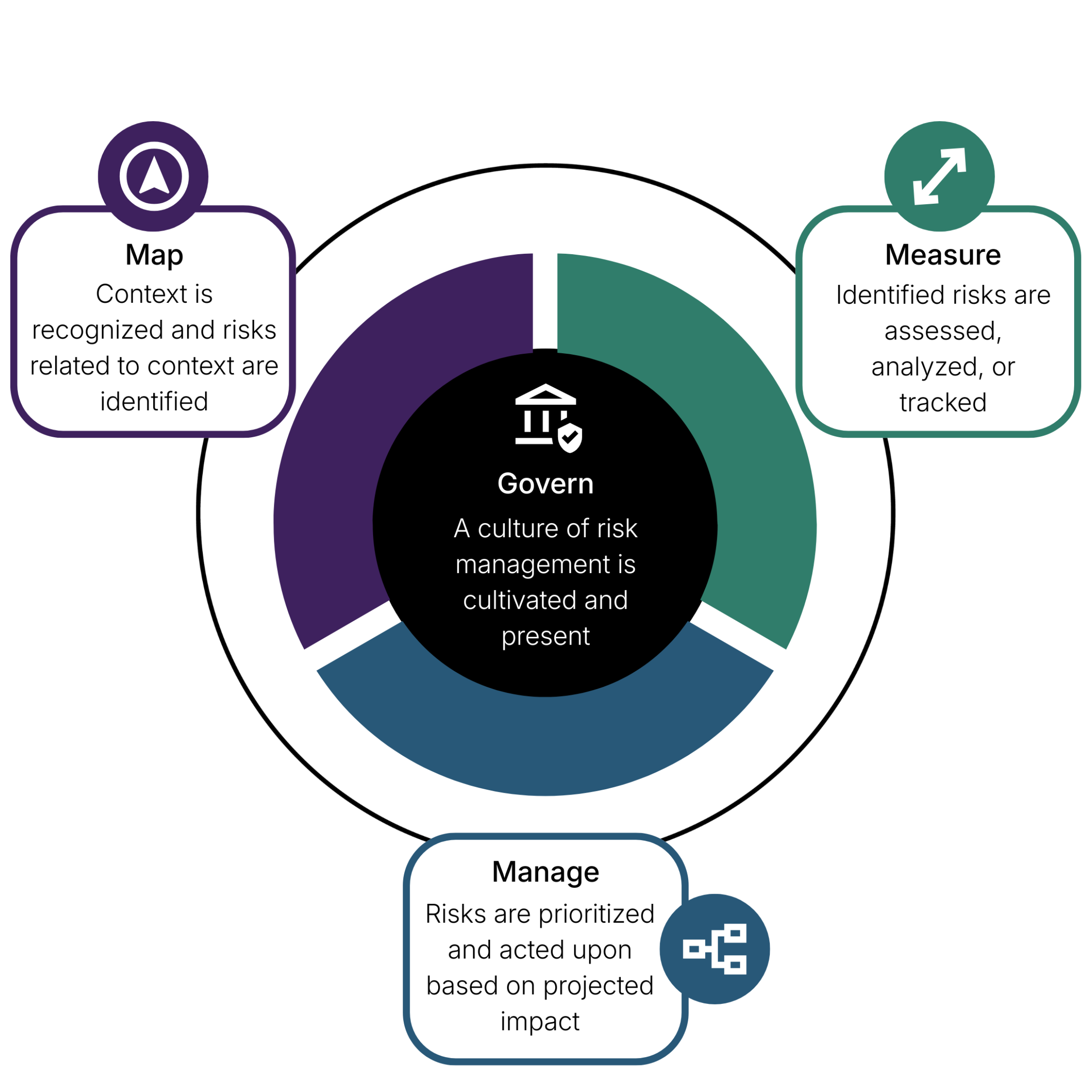

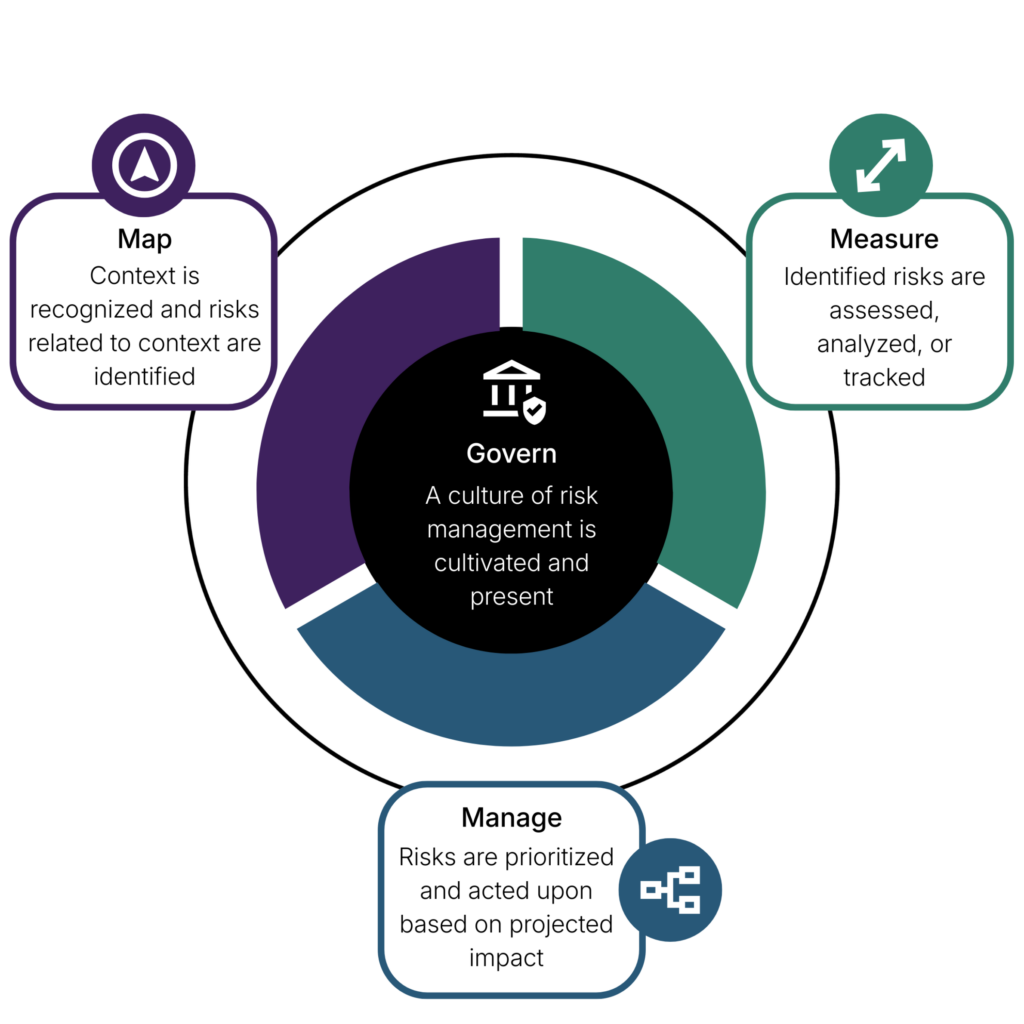

This is why the NIST AI Risk Management Framework is a useful reference point. NIST organizes AI risk management around 4 core functions: Govern, Map, Measure, and Manage.

It also describes trustworthy AI as valid and reliable, safe, secure and resilient, accountable and transparent, explainable and interpretable, privacy-enhanced, and fair. In plain English, that means AI needs structure around it. It needs ownership, monitoring, evidence, and controls, not just a clever output.

McKinsey’s 2025 global AI survey reinforces why this matters in practice. It found that 51% of respondents from organizations using AI had already seen at least 1 negative consequence from AI, with inaccuracy among the most commonly reported issues. That is not a reason to avoid AI. It is a reason to govern it properly.

For GRC teams, trusted and verified AI should mean:

- It uses approved evidence sources, not open-ended guesswork

- It links outputs back to source documents

- It records review history and decision ownership

- It keeps human approval in place for high-impact decisions

- It works inside existing risk, compliance, audit, controls, and third-party workflows

- It can be tested, challenged, and explained

- It supports board and regulator scrutiny, not just internal productivity

The key is to remember the difference between AI that helps a team appear to move faster and AI that empowers a team to move faster, with confidence, in the right direction.

Start the conversation with CoreStream GRC’s mini-framework

A practical way to start is with 5 simple tests. Before AI is embedded into a GRC workflow, teams should be able to answer these questions clearly.

“The 5 tests of trusted AI-enabled GRC”

- Evidence test

Can the output be traced to real documents, controls, or records?

- Ownership test

Who reviewed it, approved it, and owns the final decision?

- Governance test

Does the AI-supported process follow your internal AI, data, privacy, security, and risk policies?

- Workflow test

Does the output trigger action inside the GRC process, such as remediation, approval, escalation, reporting, or control review?

- Defensibility test

Could this output be shown to an auditor, regulator, board, or senior stakeholder with confidence?

These tests are deliberately simple. That is the point. Trusted AI-enabled GRC should not depend on vague confidence in a tool. It should be easy to ask: where did this come from, who checked it, what happens next, and can we defend it?
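
One way a team might record the answers to these tests is as a simple checklist attached to each AI-assisted output. The sketch below is illustrative only: the `TrustCheck` class and its field names are hypothetical and do not describe any CoreStream GRC feature.

```python
from dataclasses import dataclass


@dataclass
class TrustCheck:
    """Answers to the 5 tests for one AI-assisted output (hypothetical structure)."""
    evidence: bool       # traceable to real documents, controls, or records
    ownership: bool      # a named person reviewed, approved, and owns the decision
    governance: bool     # follows internal AI, data, privacy, security, and risk policies
    workflow: bool       # triggers action inside the GRC process
    defensibility: bool  # could be shown to an auditor, regulator, or board

    def failed_tests(self) -> list[str]:
        """Return the names of any tests that were not passed."""
        return [name for name, passed in vars(self).items() if not passed]

    def ready(self) -> bool:
        """An output is ready only when all 5 tests pass."""
        return not self.failed_tests()


check = TrustCheck(evidence=True, ownership=False, governance=True,
                   workflow=True, defensibility=False)
print(check.ready())         # False
print(check.failed_tests())  # ['ownership', 'defensibility']
```

Listing the failed tests by name, rather than returning a single pass or fail, keeps the conversation on what is missing and who needs to supply it.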

Why does human judgment in GRC still matter in the age of AI?

Used correctly in GRC, AI should reduce low-value admin, not remove accountability.

That distinction matters because GRC work is not just about producing outputs. It is about making judgments. Is this control good enough? Is this risk within appetite? Is this third party acceptable? Does this evidence actually prove what we say it proves? Should this be escalated?

AI can help teams get to those questions faster. It can summarize evidence, suggest control mappings, structure findings, and highlight gaps. But it cannot own the decision. It cannot understand the full business context, risk appetite, regulatory exposure, or political sensitivity in the same way an experienced GRC professional can.

“AI is powerful for automation, but its impact depends entirely on how it’s applied within each organization. Human judgment still leads the way, AI gives people the time to use it well.”

Paul Cadwallader, GRC Strategy Director, CoreStream GRC

One useful way to think about AI in GRC is to treat it like junior work. It can produce a first draft. It can spot patterns. It can organize information. It can accelerate research. But it still needs clear direction, review, challenge, and feedback.

A good manager would not take a junior colleague’s first draft and send it straight to the board. The same logic applies to AI.

The risk is not that AI helps people work faster. The risk is that teams stop asking whether the output is complete, balanced, evidenced, and fit for the decision being made.

That is where human judgment becomes more important, not less. As AI takes on more of the manual work, the value of the GRC professional moves toward challenge, interpretation, decision support, and system design. In other words, the human role becomes less about chasing information and more about deciding what the information means.

For high-impact GRC decisions, the better model is not “human in the loop” as a final rubber stamp. It is AI in the loop: the person owns the judgment at the heart of the decision, and AI supports the process.

Where can AI create real value in GRC today?

The strongest AI use cases in GRC are not necessarily the flashiest. They are the repetitive, evidence-heavy, document-heavy workflows where teams spend huge amounts of time reading, mapping, checking, and rewriting. AI is particularly valuable where a fatigued reviewer might begin to skim an 89-page document, whereas AI can process every word of it in much less time.

Some examples include:

- Evidence intake and summarization

- Control description review

- Control testing plan generation

- Cross-framework mapping

- Regulatory change triage

- Third-party evidence review

- Vendor due diligence summaries

- Risk description enrichment

- Audit scoping support

- Policy and procedure Q&A using approved internal sources

- Continuous monitoring and exception detection

What should GRC teams avoid when adopting AI?

The wrong approach to AI-enabled GRC is usually easy to spot.

It starts with a tool, not a problem. It promises speed before it explains governance. It talks about accuracy, but cannot show the evidence behind the answer. It creates impressive-looking outputs, but leaves users unclear on how those outputs were produced, reviewed, approved, or corrected.

That is a problem in any business function. In GRC, it is a bigger one.

If AI is going to support compliance, controls, audit, risk, or third-party processes, it needs to be more than persuasive. It needs to be traceable, governed, and usable inside the real workflow.

Common red flags include:

- “AI-powered” claims with no evidence trail

- No source citations or document references

- No clear human approval gate

- No model, prompt, or workflow version history

- No data residency or privacy explanation

- No way to use your own internal AI environment or LLM

- AI outputs that sit outside the GRC workflow

- No testing on real, redacted artifacts before purchase

- No escalation route for poor or uncertain outputs

- AI that cannot be switched off or ringfenced

Related read: Our “magic button” compliance piece looks at why surface-level compliance tools can create false confidence and what leaders should be looking for instead.

This is also our AI strategy at CoreStream GRC. We believe technology should be an enabler, not a barrier, so we respect our clients’ right to decide whether AI should be included in their environment. AI should be used where it adds value, not applied everywhere by default. Our AI integrations are configured for specific use cases and are not embedded into the core platform, meaning clients can ringfence CoreStream GRC from AI where needed.

At the same time, we are continuously evaluating the AI GRC market and working with best-in-class partners where they can strengthen our community’s outcomes. The goal is not AI for the sake of AI. It is the right AI, in the right workflow, with the right controls around it.

Case study: What does intelligence-first GRC look like?

Intelligence-first GRC means AI is not bolted onto the side of a compliance process. It means AI is working from real evidence, inside real governance workflows, with outputs that can be reviewed, acted on, and defended.

That is the thinking behind CoreStream GRC’s partnership with SANNOS. SANNOS is an AI-native compliance engine built and supported by GRC veterans from Big 4 consulting and regulated industries.

It reads your own and your vendors’ assets, such as policies, contracts, SOC reports, ESG documentation, and third-party documents, then maps them to frameworks, flags gaps, and generates audit-ready outputs with traceable citations back to the source text.

SANNOS also brings credibility where it matters. CoreStream GRC’s SANNOS integration content states that SANNOS delivers 98% accuracy and is the only AI platform accredited by the Secure Controls Framework. That gives GRC teams a stronger basis for using AI in serious compliance environments, where evidence, traceability, and defensibility matter more than speed alone.

“The problem with generic AI in compliance is that it can sound convincing without being defensible. Our approach is different. We work from real documentation and control evidence, so the output is grounded, explainable, and ready for serious review.”

Anders Søborg, Co-CEO, SANNOS

Inside CoreStream GRC, those AI-generated insights can then become part of the wider operating model: ownership, remediation, approvals, third-party workflows, reporting, and audit-ready records. That is the difference between AI as a chatbot and AI as part of a governed GRC process.

See it in practice: Watch the on-demand SANNOS x CoreStream GRC webinar to explore what intelligence-first GRC looks like inside real enterprise workflows.

Why does AI integration matter more than another AI tool?

A lot of organizations are not starting from zero with AI.

Many already have approved enterprise AI licenses, secured internal environments, client-owned LLMs, or clear IT policies around which tools can and cannot be used. In those cases, the question is not always “which AI tool should we buy next?”

The better question is: how do we connect the AI we already trust into the GRC processes that matter?

That is where integration becomes more valuable than another standalone AI tool.

If GRC teams are forced to copy content into a separate AI interface, review the output elsewhere, and then manually paste it back into their platform, the risk does not disappear. It just moves. Evidence becomes harder to trace. Review steps become less visible. Decisions become disconnected from the workflow they are supposed to support.

A better model is to bring approved AI into the governed environment where the work already happens.

That means AI can support tasks such as control review, evidence summarization, risk wording, third-party assessment, or policy queries, while the GRC platform continues to manage ownership, review, approvals, remediation, reporting, and audit history.

This is why CoreStream GRC takes an AI-agnostic approach. Some teams will want to use best-of-breed AI partners. Others will want to connect approved enterprise AI environments or client-owned LLMs. Others may want to keep AI switched off entirely for certain processes.

The value is in giving organizations that choice, while keeping the GRC workflow controlled.

Our client Pets at Home shows what this can look like in practice. CoreStream GRC integrated AI into the client’s existing GRC workflow to support wording suggestions for reviews, reducing time-intensive manual creation and improving productivity. The AI did not sit outside the process. It supported the work inside it.

Our mission is to be the preferred and trusted GRC platform for enterprises worldwide by delivering intuitive, flexible solutions that drive efficiency and value, their way. This includes AI solutions.

“The point is not to force every organization into one AI model. The point is to connect seamlessly the right AI to the right workflow, with the right controls around it.”

Rich Eddolls, Chief Product Officer, CoreStream GRC

How should you evaluate AI-enabled GRC software?

GRC buyers need to test beyond the demo.

A polished AI answer means very little if the tool cannot show its source, route the output for review, or create an audit trail. The real test is not whether the AI sounds impressive. It is whether the output can be trusted inside a live risk, compliance, audit, controls, or third-party workflow.

Before adopting AI-enabled GRC software, ask:

- Can the AI work from approved internal documents and evidence?

- Does every output include citations or source references?

- Can humans approve, reject, amend, and explain AI-generated outputs?

- Is there a complete audit trail of prompt, source, reviewer, decision, and timestamp?

- Can the AI connect to existing workflows for remediation, approvals, ownership, and reporting?

- Can the platform integrate with client-owned LLMs or approved enterprise AI environments?

- Can AI be switched off, ringfenced, or limited by use case?

- How does the provider test accuracy, drift, bias, and hallucination?

- What data is used, where is it stored, and who can access it?

- Can you test the system using your own redacted evidence before purchase?

This is where SANNOS is a useful benchmark.

SANNOS is built and designed by ex-CROs for evidence-based compliance analysis, not generic language generation.

What does good AI-enabled GRC look like after implementation?

The goal is not a bigger technology stack. It is a stronger operating model.

Good AI-enabled GRC should make work faster, but also clearer. It should reduce the time teams spend chasing evidence, rewriting summaries, duplicating control mappings, and manually reviewing documentation. But the real value is what that time gives back.

It moves GRC teams closer to the work that actually needs their judgment: interpreting risk, challenging weak controls, reviewing exceptions, advising the business, and making better decisions.

That is the shift from box-checking to strategic assurance.

In practice, good AI-enabled GRC should help teams achieve:

- Faster evidence review

- More consistent control mapping

- Better third-party assessment quality

- Fewer manual questionnaires

- Reduced duplicated control work

- Clearer remediation ownership

- Stronger board and audit reporting

- Traceable, source-backed outputs

- Less dependence on spreadsheets and email

- More time for judgment, challenge, and improvement

The important point is that AI should not create a separate layer of work. It should strengthen the existing GRC process, providing that additional intelligence layer. Evidence should flow into review. Gaps should become actions. Actions should have owners. Decisions should be recorded. Reporting should reflect what is actually happening.

That is what makes AI useful in GRC. Not just faster answers, but better-controlled outcomes.

Where should GRC teams start?

GRC teams do not need to solve every AI question at once. The safer path is to start with specific, measurable use cases where manual effort is high and decision risk can be controlled.

Phase 1: Identify the pressure points

Start with the work that slows the team down today. Look for evidence-heavy, repetitive processes where people spend hours reading, checking, mapping, and chasing updates.

Good starting points include control reviews, third-party onboarding, audit preparation, regulatory change triage, policy reviews, and evidence summaries.

Third-party risk is one area many clients begin with. Instead of relying on long questionnaires alone, AI can help review uploaded vendor documents, identify gaps, and suggest clarification questions for human review. The team still owns the decision, but the manual document review becomes faster and more consistent, and vendors are not left filling in hundreds of questions.

Another example:

Prompting is becoming a practical GRC skill because a weak prompt creates weak outputs. A strong prompt sets the role, audience, evidence base, boundaries, and review point.

A simple prompt structure could look like this:

“Act as a compliance analyst. The audience is the Head of Risk. Review the text below and identify the key risks, evidence gaps, and follow-up questions. Use only the information provided. Do not make a final decision. Structure the output as: summary, risks, missing evidence, suggested follow-up questions.”

Then review it like junior work. Challenge it. Ask what it missed. Ask what evidence supports the answer. Ask whether the output is useful enough to move into a governed workflow.
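
The prompt structure above can be turned into a small reusable template so that the role, audience, boundaries, and output shape are set the same way every time. This is a minimal sketch: the `build_prompt` function and its parameters are hypothetical, and the placeholder text stands in for whatever redacted evidence a team chooses to supply.

```python
def build_prompt(role: str, audience: str, task: str, sections: list[str], text: str) -> str:
    """Assemble a prompt that sets role, audience, boundaries, and output shape."""
    return "\n".join([
        f"Act as a {role}. The audience is the {audience}.",
        task,
        "Use only the information provided. Do not make a final decision.",
        "Structure the output as: " + ", ".join(sections) + ".",
        "",
        "Text to review:",
        text,
    ])


prompt = build_prompt(
    role="compliance analyst",
    audience="Head of Risk",
    task="Review the text below and identify the key risks, evidence gaps, and follow-up questions.",
    sections=["summary", "risks", "missing evidence", "suggested follow-up questions"],
    text="<paste redacted source text here>",
)
print(prompt)
```

Keeping the boundary sentence fixed inside the template, rather than leaving it to each user, makes it harder for the "do not make a final decision" instruction to be dropped by accident.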

Phase 2: Define what AI is allowed to do

Be clear about the boundaries. Decide where AI can summarize, suggest, classify, map, or draft, and where human approval must remain mandatory.

For example, AI may be allowed to suggest a control mapping, but not approve it. It may summarize vendor evidence, but not assign the final risk rating. It may draft a remediation action, but not close the issue.
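
These boundaries can be written down as an explicit rule set rather than left to convention. The sketch below assumes a simple two-actor model ("ai" and "human") and uses hypothetical action names taken from the examples above; a real platform would enforce this through its own roles and permissions.

```python
# Hypothetical policy: actions AI may perform versus actions reserved for a human.
AI_ALLOWED = {"summarize", "suggest_mapping", "classify", "draft_remediation"}
HUMAN_ONLY = {"approve_mapping", "assign_risk_rating", "close_issue"}


def is_permitted(actor: str, action: str) -> bool:
    """Return True if the actor ('ai' or 'human') may perform the action."""
    if action in HUMAN_ONLY:
        return actor == "human"
    if action in AI_ALLOWED:
        return actor in {"ai", "human"}
    return False  # unknown actions are denied by default


print(is_permitted("ai", "suggest_mapping"))  # True
print(is_permitted("ai", "approve_mapping"))  # False
print(is_permitted("human", "close_issue"))   # True
```

Denying unknown actions by default means a new AI capability has to be added to the policy deliberately before it can be used.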

Phase 3: Build the evidence and review trail

Every AI-supported output should have a trail behind it. Teams should be able to see the source evidence, review history, decision owner, approval status, and any changes made before the output was finalized.

Without that trail, AI becomes another black box and potential business risk. With it, AI becomes part of the control environment.
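
As an illustration of what such a trail might hold, the sketch below models one AI-supported output together with its source evidence, decision owner, and review history. The class names, fields, and sample values are all hypothetical and are not drawn from any specific product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ReviewEvent:
    reviewer: str
    decision: str  # e.g. "approved", "rejected", "amended"
    note: str
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


@dataclass
class AIOutputRecord:
    output_text: str
    source_documents: list[str]  # references to the evidence the output used
    decision_owner: str
    history: list[ReviewEvent] = field(default_factory=list)

    def record_review(self, reviewer: str, decision: str, note: str = "") -> None:
        """Append a timestamped review event; earlier events are never overwritten."""
        self.history.append(ReviewEvent(reviewer, decision, note))

    @property
    def approval_status(self) -> str:
        """Status is the latest recorded decision, or 'pending' if unreviewed."""
        return self.history[-1].decision if self.history else "pending"


record = AIOutputRecord(
    output_text="Control AC-01 maps to policy section 4.2.",
    source_documents=["access-policy-v3.pdf"],
    decision_owner="Head of Compliance",
)
print(record.approval_status)  # pending
record.record_review("J. Smith", "amended", "Corrected policy section reference.")
record.record_review("Head of Compliance", "approved")
print(record.approval_status)  # approved
print(len(record.history))     # 2
```

Because reviews are appended rather than replaced, the record shows the changes made before the output was finalized, not only its final state.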

Phase 4: Test on real use cases

Generic demos are not enough. Use redacted internal artifacts where possible, such as policies, vendor documents, control evidence, audit findings, or regulatory updates.

The question is not whether the AI works in theory. It is whether it works with your data, your workflows, your risk appetite, and your review process.

Phase 5: Scale only where it proves value

Do not scale because the demo looked good. Scale because the use case has proven its value and you have reviewed the outputs and trust them.

Track practical measures like time saved, rework reduced, first-pass acceptance, evidence quality, reviewer confidence, and whether decisions are easier to explain.
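
Two of these measures, first-pass acceptance and time saved, are simple to compute once review decisions are recorded. The sketch below uses hypothetical decision labels and illustrative figures only; they are not measurements from any real deployment.

```python
def first_pass_acceptance(decisions: list[str]) -> float:
    """Share of AI outputs approved without amendment on first review."""
    if not decisions:
        return 0.0
    return sum(d == "approved" for d in decisions) / len(decisions)


def time_saved_hours(manual_minutes: float, assisted_minutes: float, n_items: int) -> float:
    """Total hours saved across n items, given average minutes per item."""
    return (manual_minutes - assisted_minutes) * n_items / 60


# Illustrative pilot data: first-review decisions for five AI-assisted outputs.
pilot = ["approved", "amended", "approved", "rejected", "approved"]
print(first_pass_acceptance(pilot))  # 0.6
print(time_saved_hours(45, 15, 40))  # 20.0
```

Tracking the two together helps avoid scaling a use case that saves time only because reviewers are accepting outputs they should be amending.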

That is how AI adoption becomes controlled, measurable, and useful, not a one-off experiment.

If you are already using AI, but want more control

The priority is to move from experimentation to structure.

That means deciding which AI tools are approved, where they can be used, what data can be shared, who reviews the outputs, and how those outputs become part of the audit trail.

CoreStream GRC’s AI datasheet sets out this approach as responsible AI you control: opt-in AI, best-of-breed partners, client-owned LLM options, client-specific Azure OpenAI, and the option to run without AI integrations where needed.

Partner choice matters too, because different AI use cases need different capabilities.

CoreStream GRC works with AI partners that support specific GRC outcomes:

Black Kite for third-party cyber risk management, helping automate assessments, map findings to frameworks, quantify risk, and reduce manual effort.

SANNOS for evidence-based compliance analysis, framework mapping, gap detection, and audit-ready outputs.

Xapien for modern due diligence, supporting automated gathering and reporting while keeping results available for triage, collaboration, and audit-ready records.

Signal AI for external risk and reputation intelligence, feeding alerts into CoreStream GRC so teams can score, route, and act through existing workflows.

CoreStream GRC’s role is to help these capabilities work inside the governance process, not outside it. AI should connect into risk management, audit management, compliance management, and approved business-owned LLMs with the governance and choice enterprise teams need.

The final question is simple: where will AI add value without weakening control?

That is what a workshop is for. We can help you review your current workflows, identify the right AI use cases, decide what should stay human-owned, and map how AI can be embedded safely into your existing GRC environment.

Want to start building AI-enabled GRC that your team can trust, verify, and defend?

FAQs on AI-enabled GRC

What is AI-enabled GRC?

AI-enabled GRC is the use of artificial intelligence to support governance, risk, compliance, audit, controls, and third-party risk workflows. In practice, this can include evidence summarization, control mapping, policy Q&A, vendor document review, regulatory change triage, and audit preparation.

The key point is that AI should support the GRC process, not sit outside it. Strong AI-enabled GRC keeps evidence, ownership, review, approvals, remediation, and reporting connected in one governed workflow.

Why does AI-enabled GRC need to be trusted and verified?

AI-enabled GRC needs to be trusted and verified because GRC outputs are used for serious decisions. A control mapping, audit finding, vendor risk assessment, or board report must be accurate, explainable, and defensible.

Generic AI can produce confident answers, but confidence is not the same as assurance. Trusted and verified AI should use approved evidence, cite its sources, keep humans in control, and create an audit trail that can stand up to review.

What are the risks of using generic AI in GRC?

Generic AI can be useful for low-risk drafting, brainstorming, and summarizing. But it can create risk if used for compliance, audit, controls, or third-party decisions without governance.

The main risks include incorrect control mappings, hallucinated justifications, weak evidence validation, data leakage, inconsistent framework interpretation, and unclear ownership. For GRC teams, the problem is not just that AI may be wrong. It is that the wrong output may enter a workflow and influence a decision.

What are the 5 tests of trusted AI-enabled GRC?

The 5 tests of trusted AI-enabled GRC are:

1. Evidence test: Can the output be traced to real documents, controls, or records?

2. Ownership test: Who reviewed it, approved it, and owns the final decision?

3. Governance test: Does it follow internal AI, data, privacy, and risk policies?

4. Workflow test: Does it trigger action, remediation, approval, or reporting inside the GRC process?

5. Defensibility test: Could this be shown to an auditor, regulator, or board?

These tests help teams separate useful AI from risky automation.

What is intelligence-first GRC?

Intelligence-first GRC means AI works from real evidence inside real governance workflows. The goal is not to generate a polished answer. The goal is to produce an output that can be reviewed, acted on, and defended.

In an intelligence-first GRC model, AI can read evidence, map requirements, identify gaps, and support reporting. The GRC platform then turns those outputs into ownership, remediation, approvals, reporting, and audit-ready records.